The Gang of Four, Their Software Patterned

One of the most famous episodes of Star Trek: The Next Generation is the second episode of the fifth season: “Darmok”. In it, the starship Enterprise and its crew have come across the Tamarians, an alien civilization whose language seems to stymie the show's conceit of a perfect “universal translator.” As the crew of the Enterprise ask the straightforward questions one would expect in a diplomatic context (“Would you be prepared to consider the creation of a mutual non-aggression pact between our two peoples?”), the Tamarians respond with translated but still incomprehensible statements filled with proper nouns and colorful imagery, like, “Shaka, when the walls fell,” or, “Temba, his arms wide,” or the phrase that provides the episode's title: “Darmok and Jalad, at Tanagra.”

As the episode progresses, with our protagonist Captain Picard and the Tamarian captain Dathon trapped together on the planet and struggling to understand each other, both Picard and his crew independently come to understand the guiding principle of these phrases: Tamarians communicate by something like imagery or metaphor, using well-known cultural allusions as shorthand for the situations they're in and the actions they're taking. The phrase “Shaka, when the walls fell” alludes to a famous historical disaster, and functions almost like a Tamarian swear-word, while “Darmok and Jalad, at Tanagra” alludes to a myth or folktale about two strangers who face a common threat and emerge as friends, and serves to represent the events of the episode itself, where Picard and Dathon face off against a strange beast and in the process bring their two peoples together.

In an article in The Atlantic—appropriately also titled “Shaka, When the Walls Fell”—Ian Bogost describes the events of the episode and provides an interesting analysis of the Tamarian language. In the process of doing a deep read of the episode's use of Tamarian, he rejects both “metaphor” and “imagery”, the words offered by the episode itself to describe the Tamarians' phrases, and instead offers two alternatives: “strategy” and “logic”.

“Strategy” is perhaps the best metaphor of all for the Tamarian phenomenon the Federation misnames metaphor. A strategy is a plan of action, an approach or even, at the most abstract, a logic. Such a name reveals what’s lacking in both metaphor and allegory alike as accounts for Tamarian culture. To be truly allegorical, Tamarian speech would have to represent something other than what it says. But for the Children of Tama, there is nothing left over in each speech act. The logic of Darmok or Shaka or Uzani is not depicted as image, but invoked or instantiated as logic in specific situations.

[…]

If we pretend that “Shaka, when the walls fell” is a signifier, then its signified is not the fictional mythological character Shaka, nor the myth that contains whatever calamity caused the walls to fall, but the logic by which the situation itself came about. Tamarian language isn’t really language at all, but machinery.

In the sort of hyper-pedantic fan spaces where Star Trek is popular, you sometimes see a criticism of the conceit of the episode: how could the Tamarians, with their florid allusions and indirect language, ever have been able to develop advanced technology like space-flight? The episode depicts their technology as superior to even the Federation's, but how could the Tamarians have built any of it without being able to issue a direct technical command like, “Connect these two pipes”? Bogost touches on this by contrasting the detail-by-detail bits of technobabble shown on the Enterprise with the terseness of Tamarian strategy:

As Troi explained, the Tamarians’ possess a sophisticated aptitude for abstraction. This capacity responds to fans’ skepticism at the Tamarian’s technological prowess. The Children of Tama would not be delayed by their inability to speak directly because they seem to have no need whatsoever for explicit, low-level discourse like instructions and requests. They’d just not bother talking about the socket wrench, instead proceeding to the actual work of building or maintaining the vessel.

[…]

While the episode doesn’t provide a Tamarian mythical equivalent [of a dense technical conversation], we can speculate on how the Tamarians would handle a similar situation. While I suppose the explicit directive to adjust thermal input by a specified amount might be rendered allegorically (some Tamarian speech is narrower than others), it’s equally likely that the entire exchange would be unnecessary, subsumed into some larger operation, say, “Baby Jessica, in her well.” The rest is just details.

Does this response mean that the fan criticism is invalid, and that the episode is “actually realistic”? Well, of course not: it's science fiction, and part of the fun of science fiction is to push past the bounds of realism and play around in the hypothetical and the near-real. There are still a myriad of ways to pick holes in the “realism” of “Darmok”, whether those holes are in the technology, the language, the culture, or all of the above.

But it can be powerful and rewarding to engage with science fiction by taking it at face value, accepting the what-if implicit in the premise, then working through what it would or could imply. That's how Bogost is engaging with the Tamarian language, by saying, “Well, what if this is how it worked? What then?” And the notion of a short phrase encoding not simply a referent but an entire approach, logic, and even world-view: well, isn't that a fascinating concept to consider?

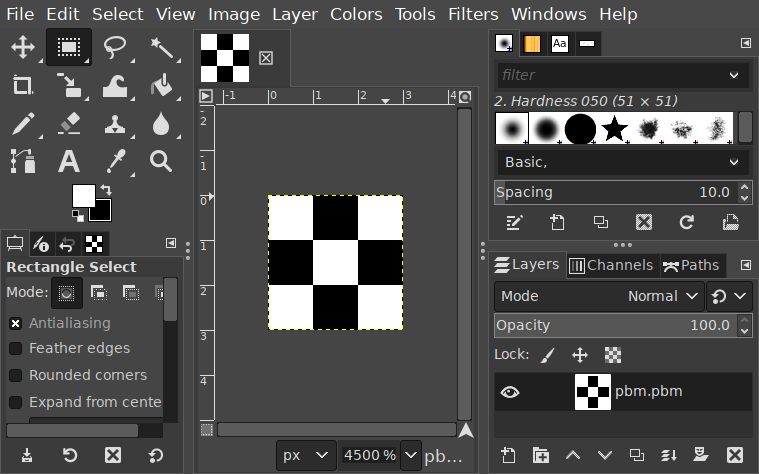

The notion of “design patterns” in software is typically associated with the book Design Patterns: Elements of Reusable Object-Oriented Software by Erich Gamma, Richard Helm, Ralph Johnson, and John Vlissides, a group also called the “Gang of Four”. This book provides the template for what many programmers imagine when they hear the words “design pattern”: the Visitor Pattern, the Command Pattern, the Abstract Factory pattern, and so forth.

Of course, design patterns didn't originate there: it's also relatively well-known that the originator of “design patterns” as a concept was the architect Christopher Alexander, particularly in the 1977 book A Pattern Language: Towns, Buildings, Construction, which Alexander co-wrote with Sara Ishikawa and Murray Silverstein. This book is actually the middle part of a series, preceded by The Oregon Experiment (which describes the design of the University of Oregon campus) and followed by The Timeless Way of Building (which acts like a summary of the approach in the previous two books). Alexander's patterns are techniques for designing spaces, ranging from region-level (like “Lace of Country Streets”) all the way down to tiny details (like “Half-Inch Trim”).

However, there's still a gap in that history of patterns. Patterns were first brought to computing well before the Gang of Four. In 1987, Kent Beck and Ward Cunningham presented a paper at OOPSLA called Using Pattern Languages for Object-Oriented Programs.

A pattern language guides a designer by providing workable solutions to all of the problems known to arise in the course of design. It is a sequence of bits of knowledge written in a style and arranged in an order which leads a designer to ask (and answer) the right questions at the right time. Alexander encodes these bits of knowledge in written patterns, each sharing the same structure. Each has a statement of a problem, a summary of circumstances creating the problem and, most important, a solution that works in those circumstances. A pattern language collects the patterns for a complete structure, a residential building for example, or an interactive computer program. Within a pattern language, patterns connect to other patterns where decisions made in one influence the others. A written pattern includes these connections as prologue and epilogue. Alexander has shown that nontrivial languages can be organized without cycles in their influence and that this allows the design process to proceed without any need for reversing prior decisions.

Something notable that appears in both the original architectural treatment and the Beck and Cunningham treatment is the emphasis not just on patterns but on pattern languages: they're not simply approaches to writing or structuring some code, but families of approaches with interdependent motivations, rationales, and logics behind them.

This is apparent if you look at any of Christopher Alexander's patterns. Each of them (as Beck and Cunningham note above) is laid out in several rigorous sections, including a problem, a solution, and a note on usage, as well as sections of background and research. Here's a selected part of a pattern from the middle of the book called “Half-Open Wall”:

Problem:

Rooms which are too closed prevent the natural flow of social occasions, and the natural process of transition from one social moment to another. And rooms which are too open will not support the differentiation of events which social life requires.

Solution:

Adjust the walls, openings, and windows in each indoor space until you reach the right balance between open, flowing space and closed cell-like space. Do not take it for granted that each space is a room; nor, on the other hand, that all spaces must flow into each other. The right balance will always lie between these extremes: no one room entirely enclosed; and no space totally connected to another. Use combinations of columns, half-open walls, porches, indoor windows, sliding doors, low sills, french doors, sitting walls, and so on, to hit the right balance.

Usage:

Wherever a small space is in a larger space, yet slightly separate from it, make the wall between the two about half-open and half-solid—Alcoves, Workspace Enclosure. Concentrate the solids and the openings, so that there are essentially a large number of smallish openings, each framed by thick columns, waist high shelves, deep soffits, and arches or braces in the corners, with ornament where solids and openings meet—Interior Windows, Columns at the Corners, Column Place, Column Connections, Small Panes, Ornament...

The last section was written in a world before widespread digital hyperlinks, but it's remarkable how amenable to hyperlinking it is. (In fact, I confess I didn't retype the above from the book, I borrowed it from a web-based presentation of the patterns in the book which of course did the hyperlinking!) Each pattern references several other patterns, positioning it within a web of related patterns, each with its own motivations and relationships.

Alexander's patterns are multi-level in a way that is self-reinforcing: using a pattern at the town level gives you scaffolding that supports the patterns shown at the neighborhood level, and in turn using patterns at the neighborhood level supports the patterns at the house level, and so forth. Alexander is explicit that this is a necessary part of how patterns are supposed to work:

In short, no pattern is an isolated entity. Each pattern can exist in the world, only to the extent that it is supported by other patterns: the larger patterns in which it is embedded, the patterns of the same size that surround it, and the smaller patterns which are embedded in it.

And for that matter, Alexander's patterns are also presented in order from large-scale to small-scale, deliberately describing the large-scale supporting patterns before the small patterns that reinforce them. Alexander remarks that the order is, “...essential to the way the language works.” Note too how Beck and Cunningham, in the passage I quoted, emphasize that a pattern language is, “...arranged in an order which leads a designer to ask (and answer) the right questions at the right time.”

Alexander also draws an explicit distinction between patterns that are “the” solution to a problem, where any possible solution to that problem must resemble the pattern given, and patterns which are merely “a” solution to the problem. Of the latter, Alexander even says:

In these cases, we believe it would be wise for you to treat the pattern with a certain amount of disrespect—and that you seek out variants of the solution which we have given, since there are almost certainly possible ranges of solutions which are not covered by what we have written.

One reason this matters is that Alexander also puts emphasis on a specific word in the title: “A”. His opinion is that not only does each society have its own “pattern language” for the design of spaces, but that every individual within that society

[...] will have a unique language, shared in part, but which as a totality is unique to the mind of the person who has it. In this sense, in a healthy society there will be as many pattern languages as there are people—even though these languages are shared or similar.

Less loftily and more practically, Alexander suggests that projects can be improved by deciding on a specific pattern language to inform the project. The net goal of a pattern language, then, is that questions which might arise in the course of a design project have existing “default” answers, to the point that they barely need to be asked. After all, by drawing on an element of a pattern language, you are not just providing a solution to a single problem: you are contextualizing that solution within a web of other choices and approaches.

You might even say that, by invoking an element of a pattern language, you are invoking not simply a singular technique but an entire approach, logic, and even world-view.

If you're a programmer and you haven't read Alexander before, you may be noticing that this doesn't actually match up with your experience of patterns, well, at all. We certainly aren't using patterns to build software as advanced as the Tamarians' technology: we're often stuck with enterprise-level technology, and I don't mean the starship this time. Patterns are notoriously associated with the most tedious and unnecessarily verbose codebases out there, with “StrategyFactory” being a snarky shorthand for over-engineered code of all kinds.

The patterns put forward by the Gang of Four are, unsurprisingly, much less lofty in their goals. If you haven't read the book that introduces them, you may be surprised to discover that they are presented, in some respects, just as schematically as Alexander's patterns, starting with Intent and Motivation that work similarly to Alexander's Problem sections. However, the book contains a significantly smaller set of patterns—only 23 in total, an order of magnitude fewer than Alexander's 253—and they mostly sit within a fairly narrow abstraction band, with a few patterns vaguely gesturing at higher-level concerns (like the Chain of Responsibility Pattern) and the rest sitting firmly at the level of a few interacting objects or classes with their own state (like the Builder or Decorator or Iterator patterns.)

One of the most common criticisms I see of design patterns—and it's an old criticism, I associate it with Peter Norvig's 1998 presentation “Design Patterns in Dynamic Programming”, but I suspect it wasn't unheard-of even then—is that patterns as put forth by the Gang of Four are simply excessively-ornate workarounds for missing programming language features, and that patterns go away in well-designed programming languages. Norvig specifically describes some of the Gang-of-Four design patterns as “invisible or simpler” in dynamically typed languages, although some of his examples are less about dynamic typing and more about the existence of anonymous or higher-order functions.

A clear example here is the Command pattern used in object-oriented languages. A Command is an interface over executing pieces of code: you have an invoker, a piece of code which knows how to execute commands, as well as one or more commands, objects which implement an interface accepted by the invoker. This interface can consist of a single method: execute().

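```java
// A command is anything that can be executed.
interface Command {
    void execute();
}

// The invoker knows how to run commands, but not what they do.
class Invoker {
    static void invoke(Command cmd) {
        cmd.execute();
    }
}

// A concrete command that prints a greeting.
class SayHello implements Command {
    public void execute() {
        System.out.println("Hello!");
    }
}

// Java requires main to live inside a class.
public class Main {
    public static void main(String[] args) {
        Invoker.invoke(new SayHello());
    }
}
```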

It's also very easy to see why this pattern is “invisible” in other languages: it's just a callback! An invoker becomes a function which takes another function and calls it. The interface becomes the type of a function which takes no arguments, or disappears entirely in a dynamically-typed language. The ceremony is gone. Even keeping, for the sake of argument, a separate invoker and static types, we can see that only a fraction of the code is necessary in Python:

```python
from typing import Callable

def invoke(cmd: Callable[[], None]):
    cmd()

def say_hello():
    print("Hello!")

invoke(say_hello)
```

I think this is a valid criticism (and, even though I'm quibbling with some parts of it, I do think Norvig's presentation is very good and more nuanced than I'm conveying here), but I'm going to defend the Gang of Four in two ways.

Firstly, the Gang of Four make it clear that commands are often not just a callback but may contain other information or scaffolding to support the command itself. You can easily imagine other data attached to a command—say, team ownership, or log information—but we don't even need to leave the book's text for an example, because the book comes with its own example: supporting “undo”. If you want to have first-class commands with an additional undo operation, then you need at least a little more structure than a simple callback. Even in Python or Lisp, an undo-capable command would need a bit of ceremony, and it's nice to be able to describe the approach as, “Using the command pattern,” instead of, “Storing a callback as a first-class value along with extra data necessary to support how we use that callback.”
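To make the undo point concrete, here is a minimal Python sketch, assuming a command interface with both `execute` and `undo` methods and an invoker that keeps a history stack. The names `AppendText`, `Invoker`, and `undo_last` are hypothetical and chosen for illustration; this is not the book's own example code.

```python
from dataclasses import dataclass, field
from typing import Protocol


class Command(Protocol):
    """A command exposes both an action and its inverse."""

    def execute(self) -> None: ...

    def undo(self) -> None: ...


@dataclass
class AppendText:
    """Hypothetical command: append a piece of text to a shared buffer."""

    buffer: list[str]
    text: str

    def execute(self) -> None:
        self.buffer.append(self.text)

    def undo(self) -> None:
        # Assumes commands are undone in reverse order,
        # so the text this command added is the last item.
        self.buffer.pop()


@dataclass
class Invoker:
    """Runs commands and records them so they can be undone later."""

    history: list[Command] = field(default_factory=list)

    def invoke(self, cmd: Command) -> None:
        cmd.execute()
        self.history.append(cmd)

    def undo_last(self) -> None:
        # Undoing with an empty history is a no-op.
        if self.history:
            self.history.pop().undo()


if __name__ == "__main__":
    doc: list[str] = []
    invoker = Invoker()
    invoker.invoke(AppendText(doc, "Hello"))
    invoker.invoke(AppendText(doc, "world"))
    print(doc)  # ['Hello', 'world']
    invoker.undo_last()
    print(doc)  # ['Hello']
```

Note that `AppendText` has to carry its buffer and text as data alongside its behavior: that extra structure is exactly what a bare callback doesn't give you.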

But there's a larger defense of the Gang of Four patterns to be made here, which is, paradoxically, that Norvig is correct: these patterns do become invisible in the right context... and that's the point.[1]

The goal of learning a language, after all, is that with fluency the language itself ceases being an object of focus and instead becomes the medium by which other ideas are expressed. When you first learn a spoken language, you explicitly track the inflections and conjugations and declensions, you rack your brain for vocabulary words, and you consciously position your tongue in ways you never did before. The process of achieving fluency is making those things disappear, so that you no longer think about the words and how to arrange them; you think about the ideas.

So if a design pattern becomes obvious or invisible, it doesn't strictly follow that it's worthless to name it or call it out as a pattern. Perhaps it means that the pattern is, so to speak, “correct”.

Despite the above vague defense of the Gang of Four, I do broadly agree that design patterns failed to live up to their promise or potential in computing.[2] They don't even live up to the original pitch that Beck and Cunningham gave: go back and read that passage and notice how many things they emphasize that are considered necessary by Alexander and yet are missing from the Gang of Four.

Perhaps the most pithy summary of my complaints is this: there are three words in the phrase “a pattern language”, and modern software development only remembers the middle one.

The first word, “a”, is important because it implies that other pattern languages can exist: that is to say, a pattern belongs not to “the pattern language” but to one pattern language among many. There can be, should be, and are others!

Remember what Alexander said about his choice of the word “a”: each individual has their own language, overlapping but unique. That's not a concept which comes up in software patterns very much, if at all, but I think it's true. Your own approach to a problem can end up wildly different from mine, because the patterns we pull on in our own pattern languages are not the same.

For that matter, each project may have its own set of techniques which differ from other projects. It's often apparent, reading the code of a project, whether it was written with a consistent set of approaches or not. Establishing a pattern language for a project, even if people don't refer to this activity by name or do it formally, can make a project more consistent and readable and accelerate all future design work in that project, because every design question will have its default answer.

The last word, “language”, is important because language is both an interrelated system and a medium for communication. The power of pattern languages is that they give us a shared vocabulary for communicating interrelated concepts.

This speaks to the other failure of Gang of Four patterns: they're not a language, they're not multi-level and reinforcing, they're not presented in an order amenable to helping a person solve problems: they're just a grab-bag of techniques. There are large-scale, architecture-level design patterns (say, “Plugin Architecture” or “Model-View-Controller” or “Functional Core, Imperative Shell”) and there are also micro-scale design patterns (say, “Defunctionalization”[3] or “Exit Early Instead Of Branching”[4]) and neither kind of pattern appears in the Gang of Four book. A proper software pattern language would assemble all its patterns into a cohesive, self-reinforcing whole.
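The micro-scale “Exit Early Instead Of Branching” pattern is small enough to show whole. Here is a hedged Python sketch of the same hypothetical function written both ways; `describe_order_nested`, `describe_order_early`, and the `items`/`paid` dictionary keys are invented for illustration. The first version wraps its body in nested conditionals, while the second checks its preconditions up front and returns as soon as one fails.

```python
from typing import Optional


def describe_order_nested(order: Optional[dict]) -> str:
    # Branching style: every check wraps the rest of the body,
    # so the main case ends up deepest and furthest to the right.
    if order is not None:
        if order.get("items"):
            if order.get("paid"):
                return f"ship {len(order['items'])} item(s)"
            else:
                return "awaiting payment"
        else:
            return "empty order"
    else:
        return "no order"


def describe_order_early(order: Optional[dict]) -> str:
    # Early-exit style: reject each bad case up front and return,
    # so the code after the guards can assume its invariants hold.
    if order is None:
        return "no order"
    if not order.get("items"):
        return "empty order"
    if not order.get("paid"):
        return "awaiting payment"
    return f"ship {len(order['items'])} item(s)"
```

Both functions return the same answer for every input; the difference is purely one of shape, which is part of why this reads as a pattern rather than an algorithm.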

Despite my bombastic framing here, I don't think design patterns will actually get us all the way, or even necessarily part of the way, to Tamarian levels of technology. But I do think design patterns, even as we have them in software today, have a little more to recommend them than the pop-culture idea of patterns might suggest, and I also think there's the potential for proper pattern languages, assembled with thought and care, to be a great way of both teaching and communicating about how to write and maintain good software.[5]

Also, make sure you read that Bogost article. It's very good.

1. There's also a flip-side to this, which is that Norvig's thesis here is also compelling from the point of view of language design: what language features might make more design patterns “invisible”?

2. It's worth noting, in fairness, that they haven't necessarily lived up to their promise in architecture, either: my understanding is that Christopher Alexander is more widely known and read by programmers with vaguely-defined multidisciplinary pretensions (yes, like me) than by architects.

3. If you're not familiar with it, defunctionalization is, more or less, “Turn a lambda or callback into a non-lambda by separating code and data.” Under the hood, this is what enables lots of async code, where functions turn into state machines so they can be suspended, but it can be useful as an explicit transformation: for example, if you want to take a data structure that contains a lambda, serialize it, and run it on another machine, then you can't simply serialize the lambda (at least in most languages), which means you need to defunctionalize it first.

4. That is to say, in a language with early returns, you can return early instead of wrapping the entire body of the function in conditionals, which is in some languages considered good style because it establishes invariants for the function up-front, prevents rightward drift, and is often conceptually easier to read. I'd absolutely contend that this is a pattern on the same level as Alexander's “Half-Inch Trim”.

5. I'll motivate this a bit with an example: Parse, Don't Validate is a design pattern. It's not necessarily described in the original post as a design pattern, and it's not typically presented as part of a pattern language, but it's a pattern nonetheless. I also think having this blog post and having a name for the approach has been wildly valuable not just in Haskell but in many other programming language communities. Not only do I wish I had had that named concept when I was first learning to program, I think I would have found it valuable if I could connect it with other strategies along the same lines, and that's where a pattern language could come in.
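The defunctionalization transformation described in the footnotes above can be sketched in a few lines of Python. This is a minimal illustration under assumed names (`make_add`, `make_scale`, `Add`, `Scale`, `apply`, `to_json`, `from_json`, and `run` are all hypothetical, as is the `"tag"` field in the JSON encoding): closures that capture a number are replaced by small data records plus a single `apply` function that holds the code, and because the records are plain data they can be serialized.

```python
import json
from dataclasses import asdict, dataclass
from typing import Callable, Union


# Before: behavior lives in closures, which json.dumps cannot serialize.
def make_add(n: int) -> Callable[[int], int]:
    return lambda x: x + n


def make_scale(k: int) -> Callable[[int], int]:
    return lambda x: x * k


# After: each kind of closure becomes a record of what it captured...
@dataclass
class Add:
    n: int


@dataclass
class Scale:
    k: int


Op = Union[Add, Scale]


# ...and one function holds the code the lambdas used to contain.
def apply(op: Op, x: int) -> int:
    if isinstance(op, Add):
        return x + op.n
    if isinstance(op, Scale):
        return x * op.k
    raise TypeError(f"unknown op: {op!r}")


# Since ops are plain data, they can round-trip through JSON,
# e.g. to be sent to another machine and run there.
def to_json(ops: list[Op]) -> str:
    return json.dumps([{"tag": type(op).__name__, **asdict(op)} for op in ops])


def from_json(s: str) -> list[Op]:
    tags = {"Add": Add, "Scale": Scale}
    result: list[Op] = []
    for d in json.loads(s):
        tag = d.pop("tag")
        result.append(tags[tag](**d))
    return result


def run(ops: list[Op], x: int) -> int:
    for op in ops:
        x = apply(op, x)
    return x


if __name__ == "__main__":
    ops = [Add(3), Scale(2)]
    wire = to_json(ops)  # '[{"tag": "Add", "n": 3}, {"tag": "Scale", "k": 2}]'
    print(run(from_json(wire), 5))  # 16
```

The trade-off is that adding a new kind of operation now means touching `apply` (and the tag table) rather than just writing a new lambda.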